Amazon Smart Vehicles / Cognitive Workload

Measuring Cognitive Workload of Message Composition While Driving

Have you ever been in a deep conversation with a passenger and realized you just missed your highway exit? It turns out that talking while driving is not "free" of cognitive cost—and neither is interacting with an AI assistant. As LLM-powered assistants become standard in vehicle cockpits, quantifying this mental distraction is critical. This study sought to define safe thresholds for voice-based message composition by measuring the hidden "cognitive tax" of speaking versus listening in a high-demand driving environment.

Conducted a high-fidelity simulator study using the Detection Response Task (DRT) to empirically quantify cognitive workload. Employed a controlled experimental design to compare the mental tax of different messaging contexts (social vs. professional) and to isolate the specific workload differences between listening (input) and composing (output).

The study revealed a significant cognitive asymmetry: the act of composing and speaking a message imposes a substantially higher mental tax than listening to an AI response. Furthermore, professional/structured messaging tasks significantly increased reaction times on the DRT compared to casual social interaction, establishing that LLM interaction is not cognitively uniform.

THE TOOLKIT: COGNITIVE ANALYSIS

Cognitive Benchmarking

Utilizing two-digit mental arithmetic (e.g., 37+17) as an upper-bound reference task to calibrate and categorize the severity of AI-induced distraction.

ISO-Standardized DRT Analysis

Objectively quantifying secondary-task interference using tactile and visual stimuli according to ISO 17488 cognitive load protocols.
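As a rough illustration of how DRT data are typically reduced, the sketch below computes hit rate and mean reaction time for one condition. It is not the study's analysis pipeline: the function name, the trial values, and the 100 to 2500 ms response window are assumptions and should be checked against the ISO 17488 text before reuse.

```python
from statistics import mean

# Assumed window (ms) in which a response counts as a valid hit.
# Confirm these bounds against ISO 17488 before relying on them.
HIT_MIN_MS = 100
HIT_MAX_MS = 2500


def summarize_drt(response_times_ms):
    """Summarize one condition's DRT trials.

    response_times_ms holds one entry per stimulus: the response latency
    in milliseconds, or None when the participant never responded.
    """
    hits = [
        rt for rt in response_times_ms
        if rt is not None and HIT_MIN_MS <= rt <= HIT_MAX_MS
    ]
    hit_rate = len(hits) / len(response_times_ms)
    mean_rt = mean(hits) if hits else None  # mean over valid hits only
    return {"hit_rate": hit_rate, "mean_rt_ms": mean_rt}


# Hypothetical trials, invented for illustration (not study data).
listening = [420, 515, 480, None, 610, 90]
composing = [650, 720, None, None, 810, 2600]

listening_summary = summarize_drt(listening)
composing_summary = summarize_drt(composing)
```

Slower mean reaction times and lower hit rates in one condition relative to another are read as higher cognitive load in that condition.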

Conversational AI Prototyping

Engineering high-fidelity LLM prompts to simulate the full latency and variance of production-intent voice assistants for early-stage HMI validation.

Amazon Smart Vehicles / Driver Distraction

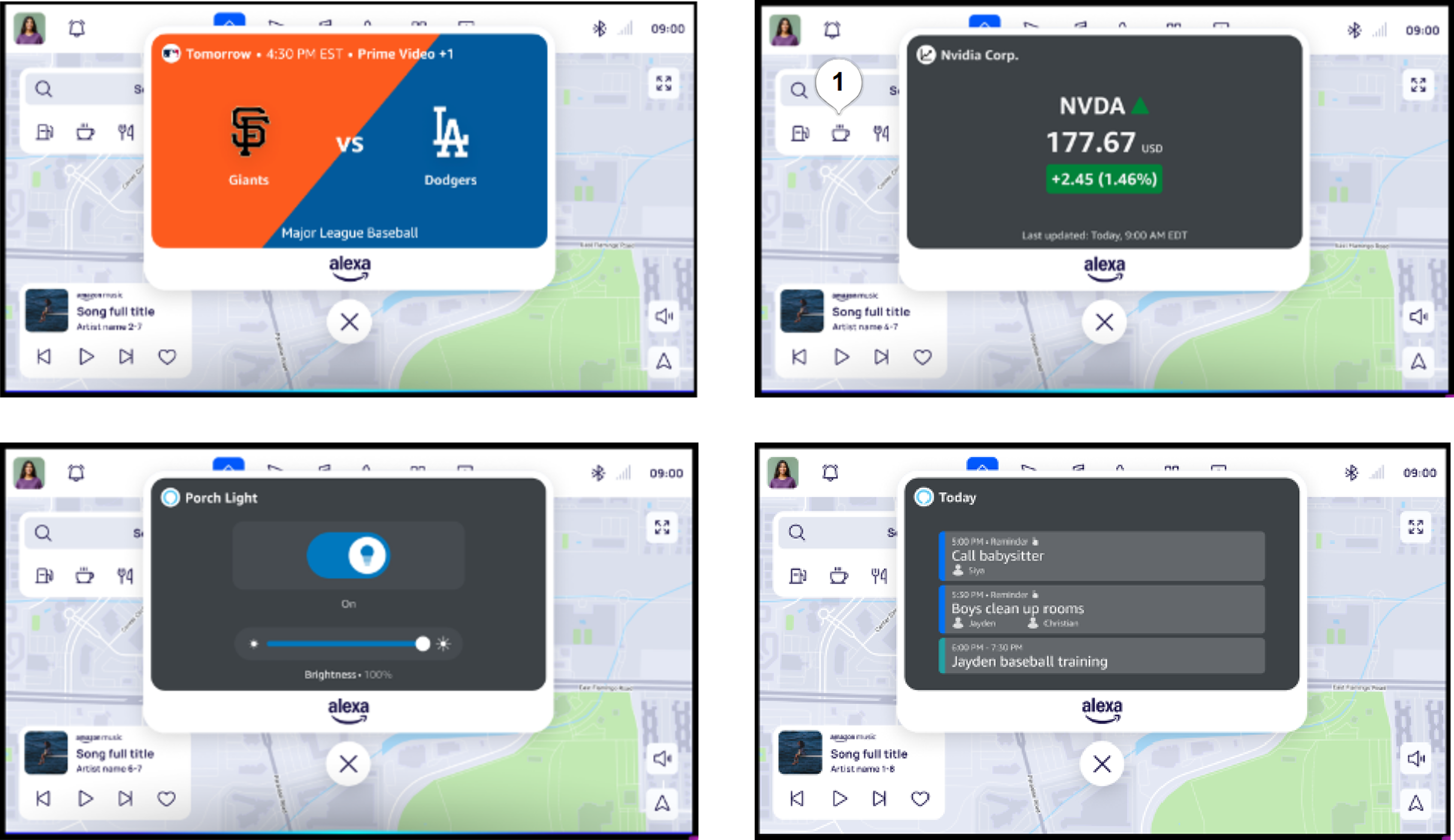

Alexa Display Cards NHTSA Validation

Validating whether concise, voice-triggered cards (Sports, Stocks, Weather, Smart Home) imposed unacceptable levels of visual-manual distraction in a production-intent vehicle.

Executed a formal NHTSA Visual-Manual Driver Distraction test. Leveraging Smart Eye eye-tracking systems, I quantified eyes-off-road glance durations to ensure safety threshold compliance.

All four knowledge domains met NHTSA acceptance criteria. The findings cleared the feature for production by demonstrating that optimized layouts allow for rapid processing without compromising road safety.

THE TOOLKIT: DISTRACTION VALIDATION

Wizard of Oz Testing

Simulating complex AI behaviors with human-in-the-loop controls to test user responses before full system deployment.

Eye-Tracking Analysis

Capturing real-time gaze data with Smart Eye systems to quantify visual distraction and precise glance behavior.
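For readers unfamiliar with how glance data map onto a pass/fail decision, here is a minimal sketch of the three eye-glance criteria as they are commonly summarized from the NHTSA Visual-Manual Driver Distraction Guidelines. The thresholds are written as named constants because they are assumptions here, not values taken from this study; the function names and data layout are hypothetical.

```python
from statistics import mean

# Assumed thresholds, as commonly summarized from the NHTSA guidelines.
LONG_GLANCE_S = 2.0           # a glance longer than this counts as "long"
MAX_LONG_GLANCE_SHARE = 0.15  # at most 15% of glances may be long
MAX_MEAN_GLANCE_S = 2.0       # mean single-glance duration limit
MAX_TOTAL_OFF_ROAD_S = 12.0   # total eyes-off-road time limit per task
REQUIRED_PASS_PERCENT = 85    # e.g. 21 of 24 participants


def participant_metrics(glances_s):
    """Return (share of long glances, mean glance, total off-road time)."""
    if not glances_s:  # no off-road glances at all
        return 0.0, 0.0, 0.0
    long_share = sum(g > LONG_GLANCE_S for g in glances_s) / len(glances_s)
    return long_share, mean(glances_s), sum(glances_s)


def task_passes(per_participant_glances):
    """Check each criterion separately across all participants."""
    n = len(per_participant_glances)
    metrics = [participant_metrics(g) for g in per_participant_glances]
    passes_per_criterion = (
        sum(m[0] <= MAX_LONG_GLANCE_SHARE for m in metrics),
        sum(m[1] <= MAX_MEAN_GLANCE_S for m in metrics),
        sum(m[2] <= MAX_TOTAL_OFF_ROAD_S for m in metrics),
    )
    # Integer arithmetic avoids floating-point edge cases at the boundary.
    return all(c * 100 >= REQUIRED_PASS_PERCENT * n
               for c in passes_per_criterion)


# Hypothetical glance durations in seconds, one list per participant.
compliant = [0.8, 1.2, 1.0]
non_compliant = [2.5, 2.4, 1.0]
sample = [compliant] * 21 + [non_compliant] * 3
result = task_passes(sample)
```

Evaluating each criterion on its own means a task can fail even when every participant satisfies two of the three limits.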

Regulatory Compliance

Expertise in NHTSA Visual-Manual Distraction protocols and international HMI safety standards (ISO/SAE).

Amazon Smart Vehicles / UX Research

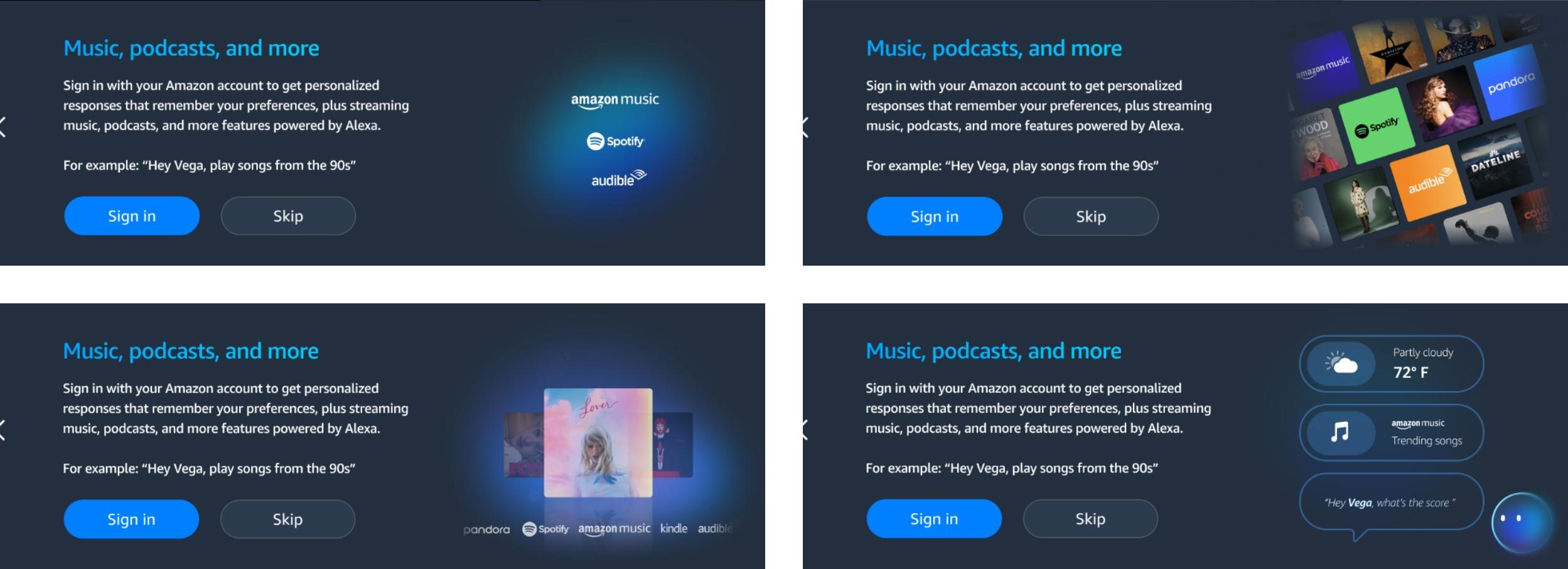

Optimizing In-Vehicle Account Linking

Drivers frequently abandon account linking during setup due to privacy concerns and unclear value. This research analyzed how text and high-fidelity visual value propositions could overcome this friction by communicating immediate utility (e.g., Spotify/Audible access) clearly enough to outweigh data-privacy anxiety.

Mixed-methods research consisting of a copy-validation study (n=120) and a between-subjects graphical treatment assessment (n=96). Quantified First-Glance Acceptance (a binary "Sign In" vs. "Skip" decision) to isolate how specific visual value propositions influenced immediate user commitment.

The high-fidelity treatment achieved a 95% sign-in intent rate, representing a 30% increase over the baseline design. This significant lift indicated that visual evidence of utility effectively mitigates privacy-driven friction and clarifies the assistant's service integration for users.

THE TOOLKIT: UX STRATEGY

Quantitative Behavioral Testing

Utilizing unmoderated platforms to execute large-scale (n=200+) between-subjects studies, capturing rapid "first-glance" behavioral intent.

Inferential Statistics

Applying binomial regression and Wilcoxon rank-sum tests to check whether observed behavioral shifts are statistically significant rather than chance variation.
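To make the rank-sum idea concrete, the sketch below implements a two-sided Wilcoxon rank-sum test with the large-sample normal approximation and a tie correction, using only the Python standard library. The source does not say which measure the test was applied to, so the rating data and function names are hypothetical; a statistics package would normally be used in practice, and the approximation is rough for samples this small.

```python
import math
from collections import Counter
from statistics import NormalDist


def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def rank_sum_test(x, y):
    """Two-sided rank-sum test: returns (W, z, p) for the first sample."""
    n1, n2 = len(x), len(y)
    n = n1 + n2
    pooled = list(x) + list(y)
    ranks = average_ranks(pooled)
    w = sum(ranks[:n1])              # rank sum of the first sample
    mean_w = n1 * (n + 1) / 2        # expected rank sum under the null
    tie_term = sum(t**3 - t for t in Counter(pooled).values())
    var_w = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if var_w == 0:
        raise ValueError("all observations are identical; test is undefined")
    z = (w - mean_w) / math.sqrt(var_w)
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return w, z, p


# Hypothetical 1-7 ratings per design, invented for illustration.
baseline = [3, 4, 4, 5, 3, 4]
treatment = [5, 6, 5, 7, 6, 4]
w, z, p = rank_sum_test(baseline, treatment)
```

A rank-based test suits ordinal ratings because it compares orderings rather than assuming the scores are normally distributed.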

Value Prop Modeling

Isolating the interaction between text copy and high-fidelity visual treatments to determine the optimal drivers of user trust and commitment.

Hazard Perception & Training in Drivers with Autism Spectrum Disorder

For young adults with Autism Spectrum Disorder (ASD), obtaining a driver's license is a vital milestone for independent living. However, the unique social and behavioral challenges of ASD can significantly interfere with hazard perception, the ability to identify and respond to risks on the road. This study aimed to quantify these challenges and test whether app-based training could bridge the safety gap between ASD and typically developing drivers.

Conducted a longitudinal study with 61 novice drivers across ASD and typically developing cohorts. Leveraging eye-tracking glasses and high-fidelity simulators, we analyzed visual scanning behaviors and vehicle control metrics (lane variability, braking) during both virtual and real-world drives. The study specifically tested the efficacy of the "Drive Focus" iPad application as a hazard training tool.

The research established a clear baseline disparity: ASD drivers detected significantly fewer hazards and exhibited higher lane departure rates than their typically developing teen peers. While the digital training alone did not eliminate this gap, the study provided objective evidence that neurodivergent drivers require more intensive, specialized training protocols to ensure long-term road safety.

THE TOOLKIT: NEURODIVERSITY & SAFETY

Gaze Distribution Analysis

Utilizing Tobii Pro eye-tracking to objectively map hazard detection and visual search patterns during simulated and live-road driving.

Kinematic Behavior Profiling

Quantifying driving performance through high-frequency simulator variables, specifically Standard Deviation of Lane Offset and lane departure frequency.
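The two metrics named above can be sketched from a sampled lane-offset trace as follows. This is an illustration under stated assumptions, not the study's processing code: the function name, the sample trace, and the departure threshold are hypothetical, and the standard deviation is the sample (n-1) form.

```python
from statistics import stdev


def lane_metrics(lane_offset_m, departure_threshold_m):
    """Return (SD of lane offset, number of lane departures).

    lane_offset_m: lateral offset from lane center in meters, one value
    per simulator sample. A departure is counted each time the absolute
    offset crosses from inside to outside the (assumed) threshold.
    """
    sd_offset = stdev(lane_offset_m)  # sample standard deviation
    departures = 0
    was_outside = False
    for offset in lane_offset_m:
        is_outside = abs(offset) > departure_threshold_m
        if is_outside and not was_outside:
            departures += 1  # count the crossing, not every sample outside
        was_outside = is_outside
    return sd_offset, departures


# Hypothetical trace and threshold, invented for illustration.
trace = [0.0, 0.2, -0.1, 1.1, 1.2, 0.3, -1.3, 0.0]
sd_offset, departures = lane_metrics(trace, departure_threshold_m=1.0)
```

Counting threshold crossings rather than samples keeps a single long excursion from being tallied as many departures.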

Situational Awareness Modeling

Applying Endsley’s SA Model to evaluate how drivers perceive, comprehend, and predict road hazards via interactive video-based testing.

Evaluation of External HMIs & Compliance with Federal Safety Standards

As automated vehicles (AVs) enter public roads, the lack of a human driver creates a communication gap—pedestrians no longer receive cues like eye contact or hand waves. While many "External Human-Machine Interfaces" (eHMI) have been proposed to signal intent, most designs violate FMVSS 108, the strict federal standard for vehicle lighting. This study sought to validate a lightbar that is both effective for communication and legally compliant.

Designed a high-fidelity Virtual Reality (VR) simulation in Unreal Engine to test pedestrian responses to AVs at busy intersections. 33 participants evaluated three conditions: a baseline (no lightbar), a compliant White-Amber signal, and a non-compliant White-White signal. Quantified trust, satisfaction (Kano Model), and the number of exposures required to correctly interpret the vehicle's intent.

The study demonstrated that an FMVSS-compliant (White-Amber) lightbar significantly increased user trust and predictability compared to the baseline. Participants learned the signals rapidly (within two exposures), and the Kano analysis categorized the feature as "Attractive," indicating that effective AV-pedestrian communication can be achieved without requiring complex new legislation.

THE TOOLKIT: IMMERSIVE VALIDATION

High-Fidelity VR Simulation

Engineering immersive Unreal Engine environments to safely validate AV-pedestrian interactions in complex urban scenarios before real-world deployment.

Kano Satisfaction Modeling

Utilizing the Kano two-dimensional model to categorize HMI features, identifying the specific drivers of user delight and product acceptance.
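A Kano classification pairs each participant's answer to a "functional" question (how would you feel if the feature were present?) with a "dysfunctional" one (if it were absent?). The sketch below uses the widely published Kano evaluation table; the answer labels, function names, and responses are hypothetical, and the study may have used a different questionnaire wording or scoring rule.

```python
from collections import Counter

ANSWERS = ("like", "expect", "neutral", "tolerate", "dislike")

# Rows: answer to the functional question (feature present).
# Columns: answer to the dysfunctional question, in ANSWERS order.
# A=Attractive, O=One-dimensional, M=Must-be, I=Indifferent,
# R=Reverse, Q=Questionable (contradictory answers).
KANO_TABLE = {
    "like":     "QAAAO",
    "expect":   "RIIIM",
    "neutral":  "RIIIM",
    "tolerate": "RIIIM",
    "dislike":  "RRRRQ",
}


def classify(functional, dysfunctional):
    """Map one participant's answer pair to a Kano category letter."""
    return KANO_TABLE[functional][ANSWERS.index(dysfunctional)]


def feature_category(responses):
    """Return the most frequent category and the full tally."""
    counts = Counter(classify(f, d) for f, d in responses)
    return counts.most_common(1)[0][0], counts


# Hypothetical answer pairs, invented for illustration.
responses = [
    ("like", "neutral"),
    ("like", "tolerate"),
    ("like", "dislike"),
    ("neutral", "neutral"),
]
category, tally = feature_category(responses)
```

Taking the most frequent category is the simplest aggregation rule; it labels a feature "Attractive" when most participants would welcome it but would not miss it if absent.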

Regulatory Lighting Compliance

Expert application of FMVSS 108 standards to ensure that experimental HMI concepts meet federal safety, color, and intensity requirements.